
Concerns about AI "misdiagnosis": Google drastically reduces automated summaries for medical queries.


AI has helped users access information faster, but in the healthcare field, speed can come at the cost of accuracy. Google acknowledges the risks and is adjusting how its AI handles sensitive queries.


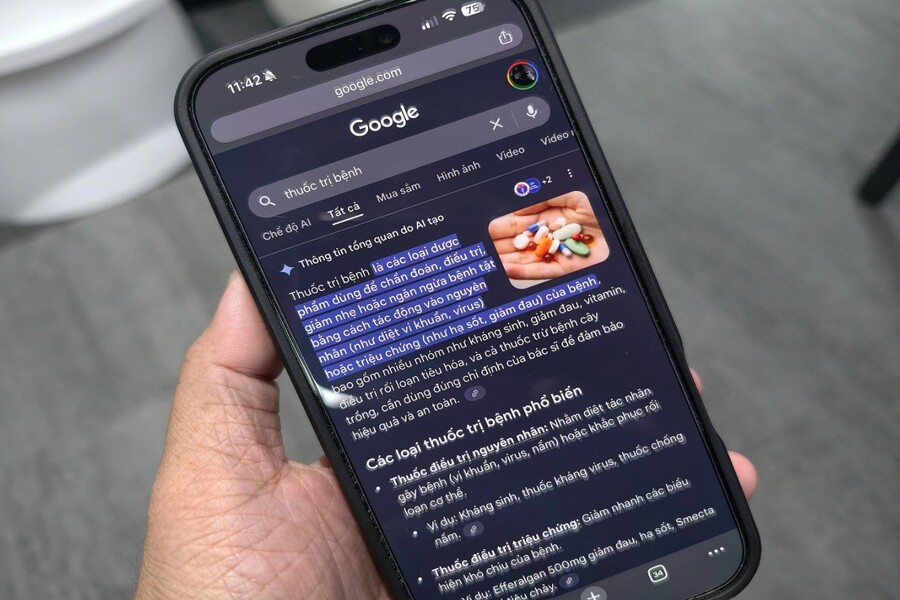

With a simple Google search query, users can find answers to almost any question: from how to fix a washing machine to information about complex diseases. As artificial intelligence (AI) becomes more deeply integrated into the search engine, Google's ambition becomes even clearer: not just to guide users to information, but to directly summarize and "explain" it for them.

However, AI has begun to touch upon the most sensitive area: human health. Recently, Google has started restricting the display of AI-generated summaries for health-related queries, after numerous medical experts and independent organizations warned about the serious potential harm to users.

This decision comes amid a series of findings from The Guardian showing that AI-generated summaries are not only inaccurate in places but, in some cases, can push patients toward dangerous choices. This is not just a story about technological errors, but also a wake-up call about how much trust we place in AI systems in life-or-death situations.

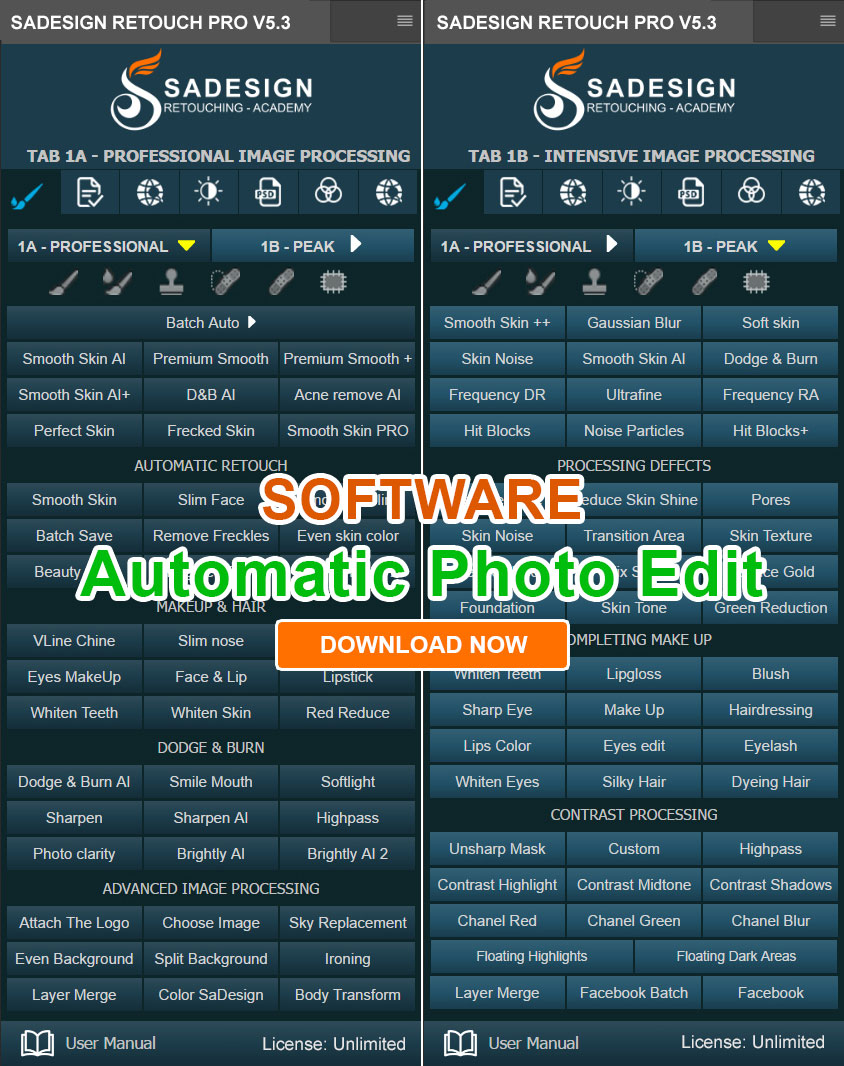

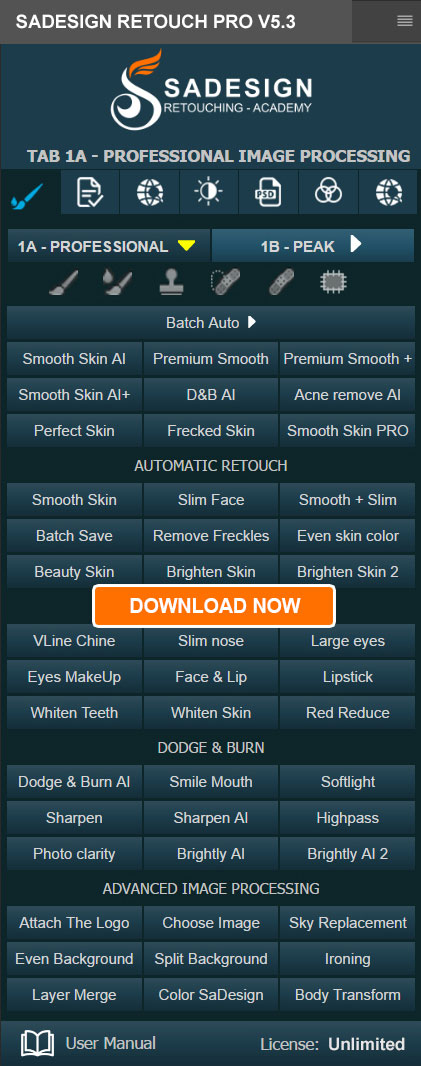


In the fields of design and user experience, shortening the information acquisition journey is always considered the ultimate goal. Google's AI-powered summarization feature, often appearing at the top of search results, is clear evidence of this philosophy. Instead of having to read multiple articles and compare different sources, users can grasp the "general answer" with just one glance.

In fields like travel, cooking, or life hacks, this approach can offer clear benefits. But in healthcare, where each individual has different medical conditions, histories, and constitutions, simplifying information can sometimes be a double-edged sword.

The problem isn't that the AI is completely "wrong," but rather that it is convincingly wrong. The clear language, neutral tone, and concise presentation easily lead users to believe this is verified information, a "final conclusion." In reality, it's just an aggregation of probabilities from training data, not personalized medical advice.

1. Google begins restricting AI display for health searches.

Following growing warnings from experts, Google has confirmed that it has been removing some AI-generated summaries from health-related queries. This marks a significant setback in its much-touted AI Search strategy.

Reportedly, many queries that previously displayed automatic summaries now only lead to traditional links, forcing users to read and cross-reference information from different sources. Google also emphasized that, regardless of AI assistance, users should still consult a professional or doctor before following any medical information.

However, this move doesn't mean Google is completely abandoning its AI ambitions in healthcare. Instead, it reflects a rare acknowledgment: AI is not yet safe enough to serve as a "direct answerer" for medical questions.


2. Shocking examples

The Guardian's findings have highlighted a series of instances where Google's AI-powered summaries provided inaccurate information, even contradicting mainstream medical recommendations.

2.1. Pancreatic cancer and misinformation about diet

One of the most serious examples involves pancreatic cancer, a disease that already has a high mortality rate and requires specialized care. Google's AI summary once advised patients to avoid high-fat foods.

However, medical experts quickly refuted this information. In fact, many pancreatic cancer patients have difficulty absorbing nutrients and need a high-energy diet, including fat, to maintain their condition. Cutting back on fat is not only unhelpful but also increases the risk of wasting and death.

A piece of bad advice, if believed completely, can be the deciding factor between life and death.

2.2. Liver function tests

Furthermore, AI summaries have been found to display misleading information about liver function test results. In some cases, the summaries misled users, including those with serious liver disease, into believing their results were completely normal.


In medicine, interpreting test results shouldn't be based on a single number, but rather on the patient's overall context. AI's ability to simplify and "draw conclusions" on behalf of doctors inadvertently eliminates this complexity, creating a false sense of security for patients.

2.3. Cancer screening in women and the risk of missing symptoms

Search results related to cancer screening for women were also found to contain inaccurate information. Some AI summaries provided misleading descriptions of the meaning of test results, potentially leading users to overlook important symptoms.

In the field of oncology, where early detection can determine survival chances, delays due to misinformation pose a significant risk.


In response to the criticism, Google acknowledged that some of the AI summaries faulted for errors still linked to reputable sources. This suggests the problem lies not simply in the quality of the sources, but in how the AI interprets, filters, and restructures the information.

A medical article may be accurate in its full context, but when partially extracted, omitting important conditions, exceptions, and notes, it can become misleading information. AI, aiming to generate concise and easily understandable answers, often trades medical accuracy for brevity.

Google says it has temporarily removed AI summaries from many health-related search queries, including popular ones like “What are the normal values for a liver blood test?” or “What are the normal values for a liver function test?”. These are seemingly harmless questions, but they can have serious consequences if answered incorrectly or without context.

3. Just change the way you ask the question, and AI reappears.

Despite Google's efforts to limit its use, many experts remain concerned. The reason is that even a slight change in the way questions are phrased could cause the AI summary to reappear.

This shows that controlling AI is not simply a matter of flipping a switch on or off. With millions of question variations and different wordings, ensuring that every health-related query is handled safely is a huge challenge.

Notably, some of the inaccuracies mentioned in the original report have not been completely removed, including information related to cancer and mental health.


Before its health-related inaccuracies were exposed, Google's AI-powered summary feature was already controversial due to a series of illogical suggestions. At one point, the AI even advised users to eat rocks to supplement minerals, or suggested adding glue to pizza to make the cheese stick better.

These examples were once considered "technological jokes," reflecting the unfinished experimental stage of AI. However, when the same mechanism is applied to medical information, the nature of the issue completely changes. It's no longer harmless humor, but a direct threat to human health and life.

Google's restriction of AI-powered summary features for health-related searches is a necessary, albeit somewhat belated, step. It demonstrates that even the largest tech corporations must confront the limitations of AI when entering fields that demand high accuracy, ethics, and accountability.

In the future, AI will continue to play a crucial role in supporting healthcare. But at present, completely entrusting health information to automated summarization systems is a risky gamble.

For users, the takeaway is: no answer on Google can replace a doctor's advice. And for AI designers, engineers, and developers, it's a reminder that a good experience isn't just about speed and convenience, but also about safety and responsibility.
